A few weeks ago, I walked into my AP Computer Science Principles class and did something that felt a little risky: I handed the conversation over to my students.

Not entirely, of course. I had designed the lesson. I had curated the resources. But the question I put in front of them…

“What policies and practices would make AI beneficial for our learning in this class?”

… that one I genuinely did not know the answer to. And I wanted to find out what they thought.

What followed was one of the most energizing class periods I have had in a long time.

We ran a jigsaw discussion. Students were assigned to one of four groups and asked to read articles and gather key findings.

- Student Reality

- Learning Signs and Risks

- Practical Implementation

- Optimistic Vision

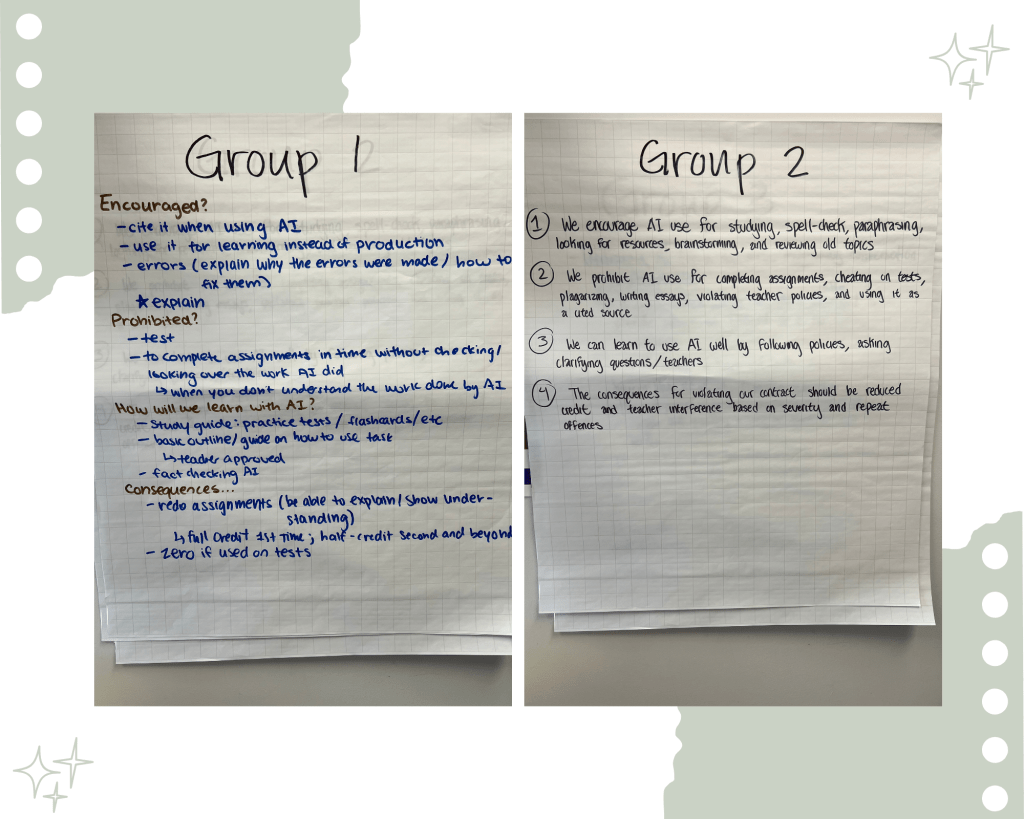

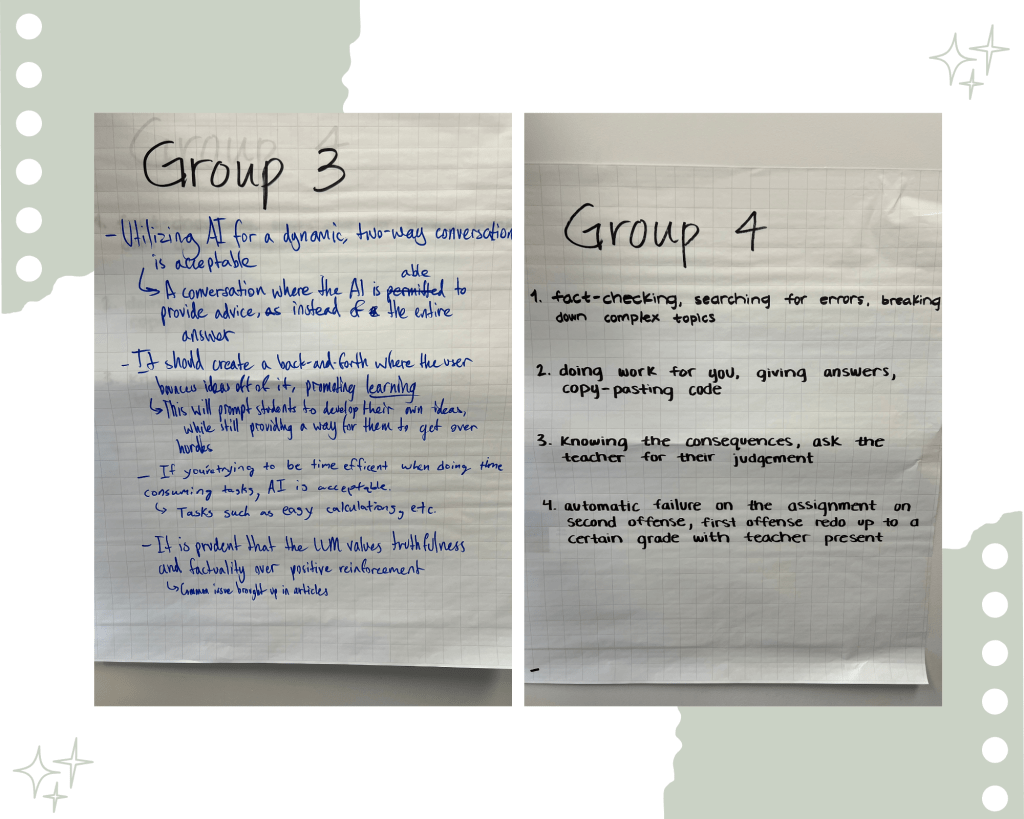

Then they reshuffled into new groups to share their findings across perspectives and draft a recommended AI policy together. Finally, we came together as a whole class to build a common set of agreements.

Click here to view the lesson and resources used

By the end of the period, my students had produced something I was genuinely proud of. They had determined, on their own, that AI should be used as a sparring partner, not a butler. That it is encouraged for debugging, brainstorming, studying, and understanding concepts, but prohibited when it replaces the thinking process entirely. They decided that there should be consequences for misuse, that they needed to cite AI the same way they cite a source, and that they should always ask before using it. They didn’t need me to tell them any of this. They figured it out.

That moment told me everything I need to know about where we should be heading as educators.

As an educator, I feel we are at a crossroads. I strongly believe AI is here to stay, and our students are using it every day. Now we have a choice: make it the big ugly monster, or teach them how to use it in ways that enhance their learning.

When calculators entered classrooms, there was significant resistance. Many teachers feared students would stop thinking, stop developing number sense, stop doing the hard cognitive work of arithmetic. And honestly? Some of those fears were not unfounded. There are real downsides. But here is what also happened: students with math learning disabilities gained access to grade-level content that had previously been out of reach (University of Minnesota, Institute on Community Integration — Accommodations Toolkit: Calculator Research). Students could explore more abstract and complex mathematical ideas without getting stuck in tedious computation. We could spend more class time on why a concept works rather than just how to carry it out. Math educators leaned in, adapted, and made it work… because we saw the benefit.

The same story played out with the internet. With Desmos. With graphing calculators. With every piece of technology that educators first feared and then figured out how to use well.

AI is not different. It is just louder.

Here is the reality: students are already using AI. According to a 2025 Pew Research study, the share of U.S. teens using ChatGPT for schoolwork doubled in just one year, from 13% to 26%, with nearly a third of 11th and 12th graders using it regularly. They were using it before we designed a policy. They are using it tonight to help them with homework. The question is never whether they will use it; the question is whether we are going to help them use it well, or leave them to figure it out on their own with no guidance, no ethical framework, and no understanding of its limitations.

Burying our heads in the sand is not neutrality. It is abdication.

As educators, we have a responsibility: not just to teach content, but to prepare students for the world they are actually going to live and work in. And in that world, AI literacy is not optional. Knowing when to use AI, when to trust it, how to verify it, how to cite it, and when to put it down and think for yourself … these are skills. And skills need to be taught.

The lesson I ran with my CSP class was not just about AI. It was about ethical reasoning. About weighing competing perspectives. About building consensus. About learning to disagree productively and still arrive at something meaningful together. These are restorative practices (IIRP, 2009). These are thinking classroom skills (peterliljedahl.com). And AI as a topic, as a tool, as a question, gave us the perfect vehicle to practice all of them.

There are real risks. I am not dismissing them. There are students who will use AI to avoid thinking, and that avoidance has real costs for their learning and development. There are equity concerns about access and about who benefits. There is misinformation. There is the very real danger of students confusing fluency with understanding.

But these risks are not reasons to turn away. They are reasons to lean in harder. A 2025 Gallup survey found that only 1 in 5 teachers works at a school with an AI policy, and nearly 70% received no training on AI tools whatsoever. That is not caution. That is a vacuum, and students are filling it on their own.

When my students concluded that AI use should always be accompanied by a thought process, that copying and pasting is prohibited, that assessments should remain AI-free, that anything which curtails problem-solving is off-limits, they were doing exactly what we want students to do. They were grappling with a hard question, gathering evidence, and making a principled argument. That is the work of education.

I do not have all the answers about what the right AI policy is. No one does yet. And that is actually the point. We are all figuring this out together… teachers, students, schools, and society at large.

But I know this: the classroom is exactly where this conversation should happen. Not after students have already formed their habits. Not once the technology has moved so far ahead of us that we are just playing catch-up. Now! With our students. In community. With care, with curiosity, and with the belief that they are capable of thinking through hard things, because they are.

They showed me that a few weeks ago.

I hope they keep showing me.